Automated farm boundary detection using neural networks

Imagine the possibilities if we could take satellite imagery from across the globe and detect farm boundaries in it. Plot size data is of great interest, yet in many countries it is not readily available and is often difficult to acquire.

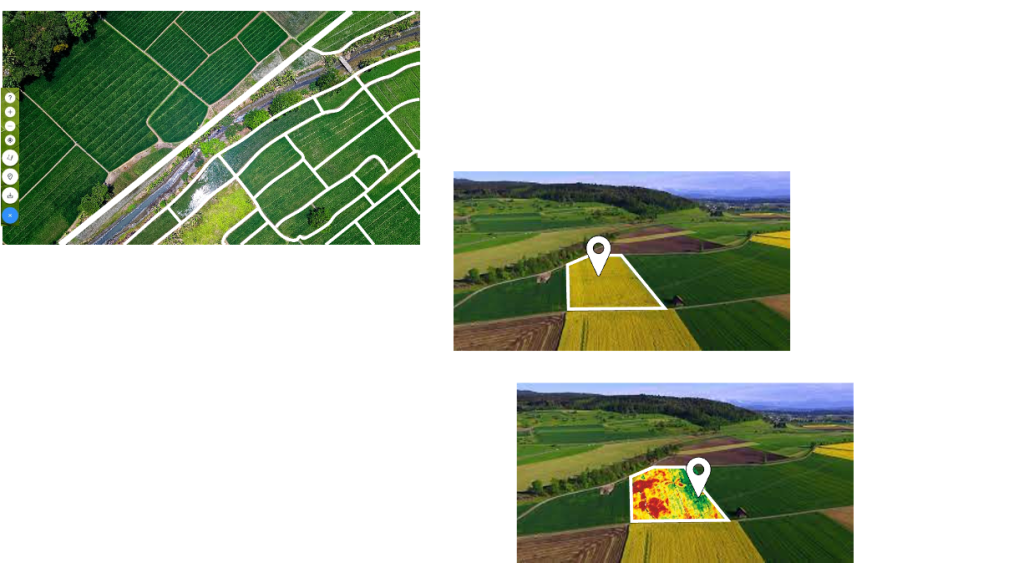

GISKernel’s automated farm boundary detection platform provides a cost-effective and accurate solution for mapping field boundaries. Automating farm boundary detection with our platform not only saves time and resources but also produces more accurate and reliable boundary maps.

Be it resilient agriculture or precision farming, farm boundary detection is an essential prerequisite. Over the years, AI has transformed the agricultural industry in many ways. Farming, especially on a large scale, requires AI solutions that not only make farmers more efficient but also improve crop productivity. AI applications in agriculture include weed detection, animal monitoring, boundary detection, and field surveillance.

Detecting farm boundaries in Google Earth imagery is quite challenging: on Google Earth, the boundaries between adjacent fields are often nearly indistinguishable. We took on this task using Google Earth satellite images and chose a neural network-based deep learning model to tackle it.

We developed an Automatic Field Delineation (also called Automatic Farm Boundary Detection) model that can detect irregularly shaped and small farm parcels. The model is built on YOLOv8, a CNN-based deep learning model. Given an input satellite image, our model outputs the delineated boundaries between farm parcels. (Check out the mesmerizing video below!)

Farm boundary detection serves several vital purposes in agriculture. Before diving into specific use cases, let us consider its three core practical applications:

- We can understand field management history.

- We can monitor trends and quantify the impacts on the fields.

- We can simplify the process of farm management and understand the input needs.

All three applications demand a blend of machine learning expertise and deep knowledge of agriculture. They differ in their temporal requirements: the first requires a long-term view that accounts for historical field management, while the second and third focus on current farming practices and local context. What all three share is a reliance on satellite-derived field boundaries.

Here are some of the use cases where our model can be valuable:

Precision Agriculture: Our farm boundary detection model enables precision agriculture by delineating the exact boundaries of each farm. This information allows farmers to optimize the use of resources such as water, fertilizers, and pesticides, reducing waste and increasing efficiency.

Crop Monitoring: By separating individual fields in remote sensing images, our boundary detection model lets farmers monitor crop health field by field, detect anomalies, and take corrective action.

Automated Machinery and Navigation: Farm machinery and robots often rely on accurate boundary information to navigate the field autonomously. By detecting and defining farm boundaries, our model contributes to developing and deploying autonomous agricultural machinery, improving operational efficiency.
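One basic building block for boundary-aware navigation is checking whether a machine's current position lies inside a detected field boundary. The sketch below illustrates this with the standard even-odd (ray casting) test. It assumes the boundary has already been exported as a polygon of (x, y) vertices in the same planar coordinate system as the position; the function name `point_in_polygon` is hypothetical and not part of our platform's API.

```python
from typing import Sequence, Tuple

Point = Tuple[float, float]


def point_in_polygon(pt: Point, polygon: Sequence[Point]) -> bool:
    """Even-odd (ray casting) test: is pt inside the polygon?

    Hypothetical helper for illustration. `polygon` lists (x, y) vertices in
    order; the closing edge from the last vertex back to the first is implied.
    Points lying exactly on an edge may be reported either way.
    """
    x, y = pt
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through pt can be crossed.
        # This condition also guarantees y1 != y2, so the division is safe.
        if (y1 > y) != (y2 > y):
            # x coordinate where the edge meets that horizontal line.
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            # Count crossings of a ray cast from pt towards +x.
            if x < x_cross:
                inside = not inside
    return inside


if __name__ == "__main__":
    # An L-shaped field, as a delineated boundary might look.
    field = [(0, 0), (4, 0), (4, 1), (1, 1), (1, 4), (0, 4)]
    print(point_in_polygon((0.5, 2), field))  # inside the vertical arm
    print(point_in_polygon((2, 2), field))    # in the notch, outside the field
```

The same test could flag positions outside a field, which is also relevant to the security use case below. Ray casting handles concave fields like this L-shape without extra work; for production use, a geometry library with robust edge-case handling would be a safer choice.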

Insurance Assessment: Insurance companies use farm boundary information to assess and manage risks related to crop insurance. Accurate delineation of farm boundaries helps determine the insured area and assess potential risks.
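Once a boundary is delineated, estimating a field's area (for an insured area or for the plot size data mentioned at the start) is simple geometry. The minimal sketch below uses the shoelace formula. It assumes the boundary is a simple (non-self-intersecting) polygon in pixel coordinates of an image with square pixels and a known ground sample distance in metres per pixel; the function names and the example resolution are hypothetical.

```python
from typing import Sequence, Tuple

Point = Tuple[float, float]


def polygon_area(polygon: Sequence[Point]) -> float:
    """Shoelace formula: area enclosed by a simple polygon, in squared input units."""
    n = len(polygon)
    twice_area = 0.0
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Cross product of consecutive vertices; the sum is twice the signed area.
        twice_area += x1 * y2 - x2 * y1
    # abs() makes the result independent of vertex order (clockwise or not).
    return abs(twice_area) / 2.0


def area_hectares(polygon_px: Sequence[Point], gsd_m: float) -> float:
    """Convert a pixel-space polygon to hectares.

    Hypothetical helper: assumes square pixels of gsd_m metres per side,
    so each pixel covers gsd_m ** 2 square metres; 1 ha = 10,000 m^2.
    """
    return polygon_area(polygon_px) * gsd_m ** 2 / 10_000


if __name__ == "__main__":
    # A 200 x 100 pixel rectangular field at an assumed 0.5 m per pixel.
    field_px = [(0, 0), (200, 0), (200, 100), (0, 100)]
    print(area_hectares(field_px, gsd_m=0.5))
```

Pixel-space areas are only meaningful if the imagery is not strongly distorted; over large extents, reprojecting the polygon to an equal-area coordinate system before measuring would give more reliable numbers.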

Security and Surveillance: Monitoring farm boundaries can enhance security by detecting any unauthorized access or activities on the farm. This is particularly important for large farms or those in remote locations.

Now that you have a brief overview of our product and its capabilities, without further ado, contact us to learn more.